Sparx Assessments

Built a 0-1 assessment product from scratch. 450 schools trusted it with their GCSEs in the first term. High stakes, tight timeline.

Role: Lead Product Designer

Timeline: 6 months

Impact: 450 schools, 88% of teachers reported workload improvements

GCSEs are a big deal

For students, they unlock the next stage of education. For teachers, they’re the benchmark they’ll be judged by.

With teachers already stretched thin, saving time is table stakes. A new tool has to go further and make their lives genuinely easier.

This was the ambition of Sparx Assessments.

The starting point wasn’t pretty

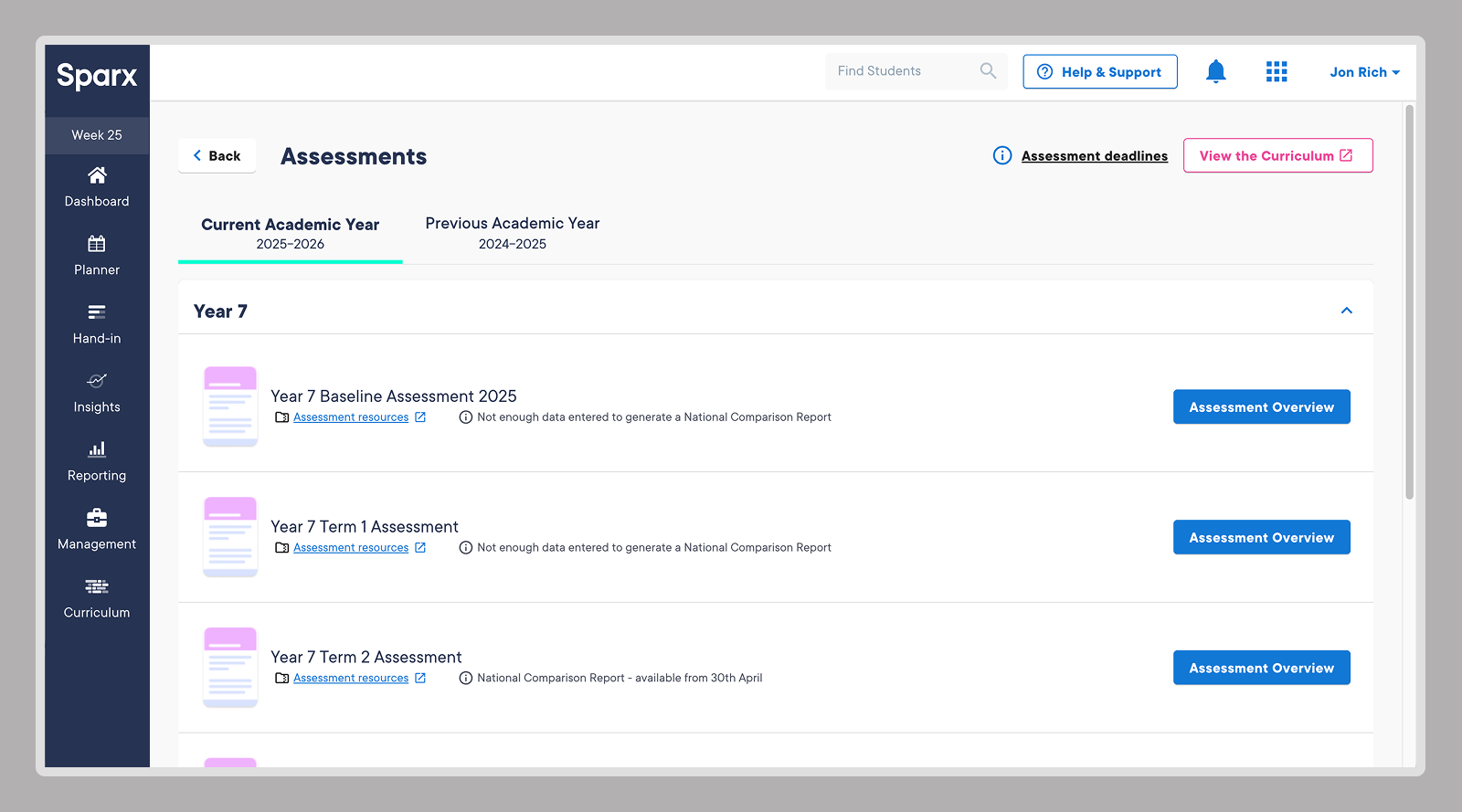

Sparx already had a proof-of-concept for assessments buried inside Sparx Maths. A handful of schools had tested it. Their appetite was large enough that we spun up a team to pull it out and build it properly.

I led the design.

The breakthrough

I ran workshops with senior stakeholders, subject advisors, and teachers. Mapped pain points. Talked about the long-term vision.

And I uncovered gold.

Teachers didn’t just see assessments as “homework but formal.” Their mental model was completely different. Assessments happened at strategic points throughout the year—tied to GCSE mock timelines, not weekly schedules.

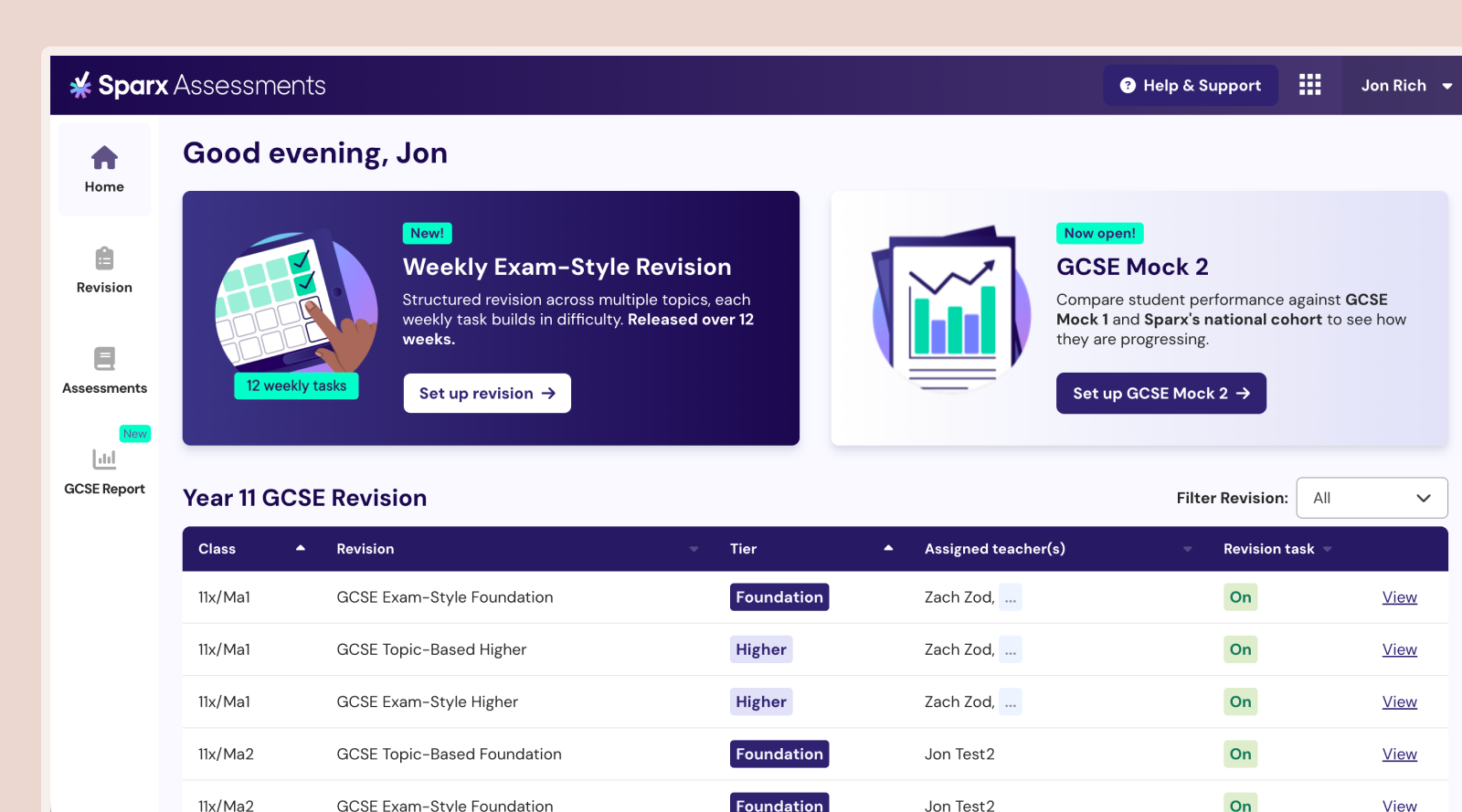

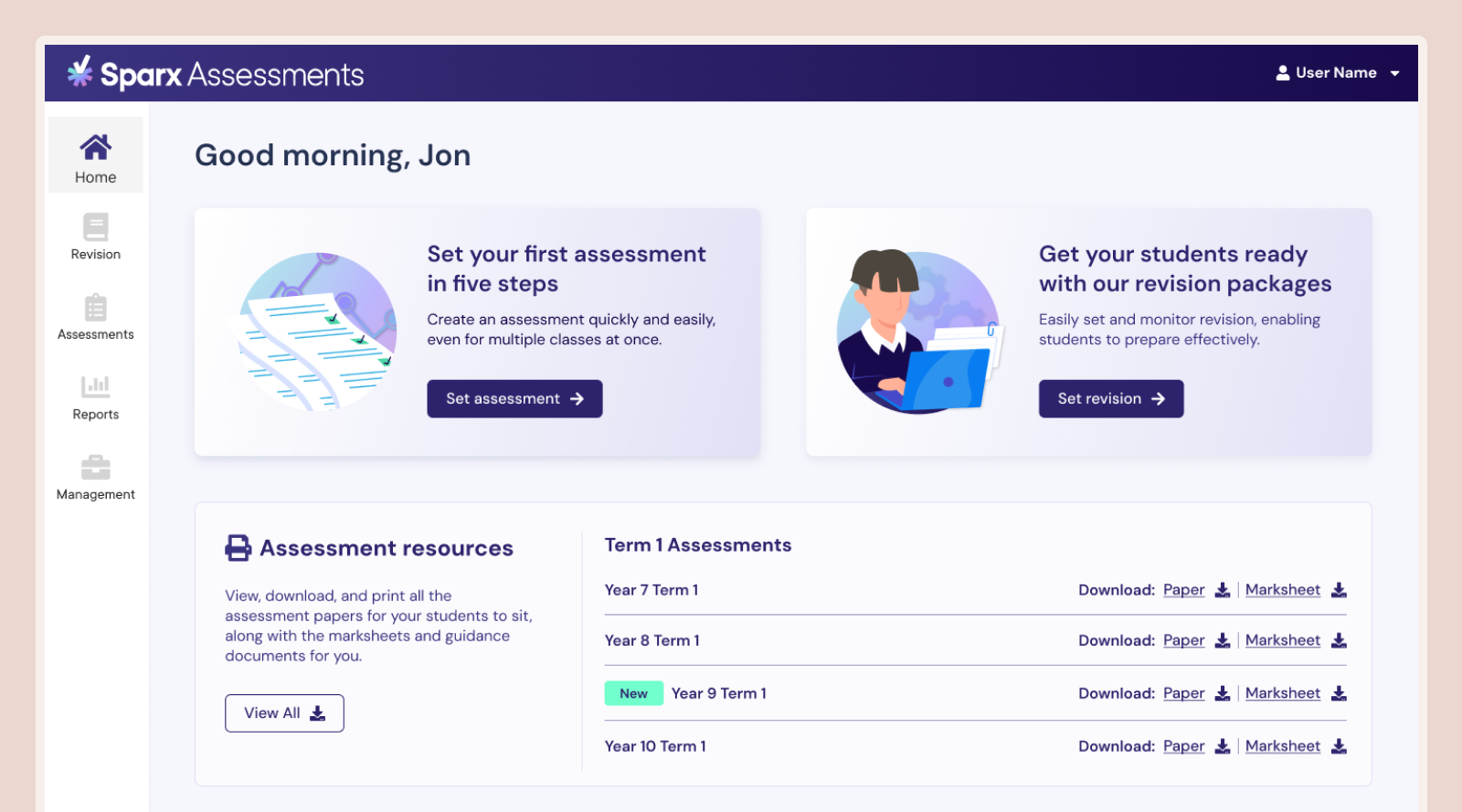

Dig deeper and there’s more: teachers see Assessments (entering marks, analyzing data, assigning fix-up work) and Revision (ongoing practice for the whole curriculum) as two distinct things.

So we split them. Completely separate areas of the product to match how teachers actually think.

Every click had to earn its place

Teachers are time-poor. Countless priorities compete for their attention. Sparx Assessments couldn’t demand more cognitive load than absolutely necessary.

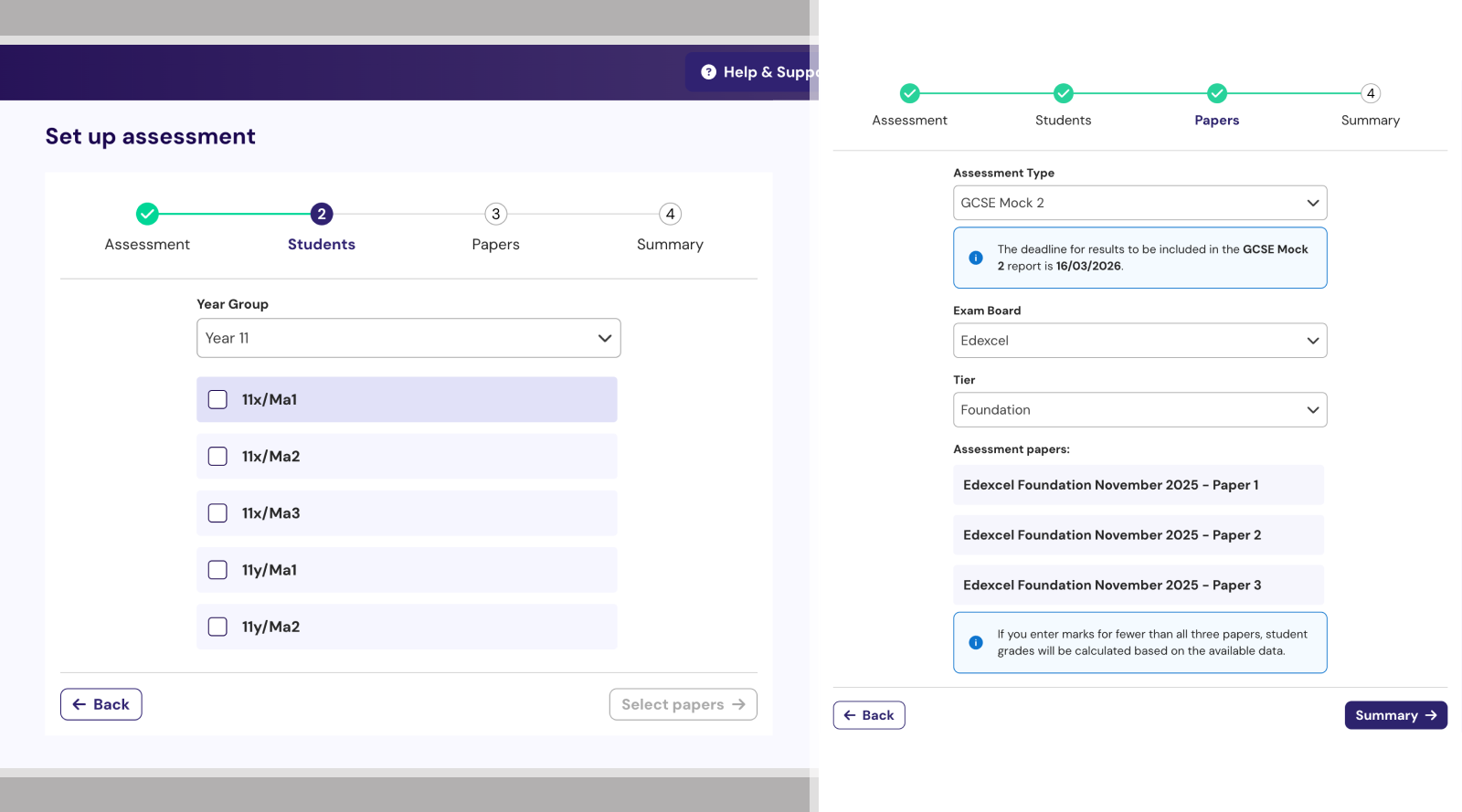

We pored over every flow. Broke setup into 4 simple steps, no more than 3 fields each.

Prototyping in production code

Six months is tight for a 0-1 product.

I prototyped with Claude Code and Cursor, building interactive prototypes that looked and felt like the real thing. We tested them with teachers, iterated, then handed them directly to engineers with edge cases already mapped.

We extended Sparx’s design system but gave Assessments its own visual weight. It needed to feel standalone, not tacked-on.

Test, learn, repeat

I embedded continuous testing throughout the build. Every two weeks: new prototype, new round of sessions. At the peak, we ran 25 sessions in a single fortnight.

Sometimes with the same teachers to see if fixes worked. Sometimes with new ones to catch blind spots.

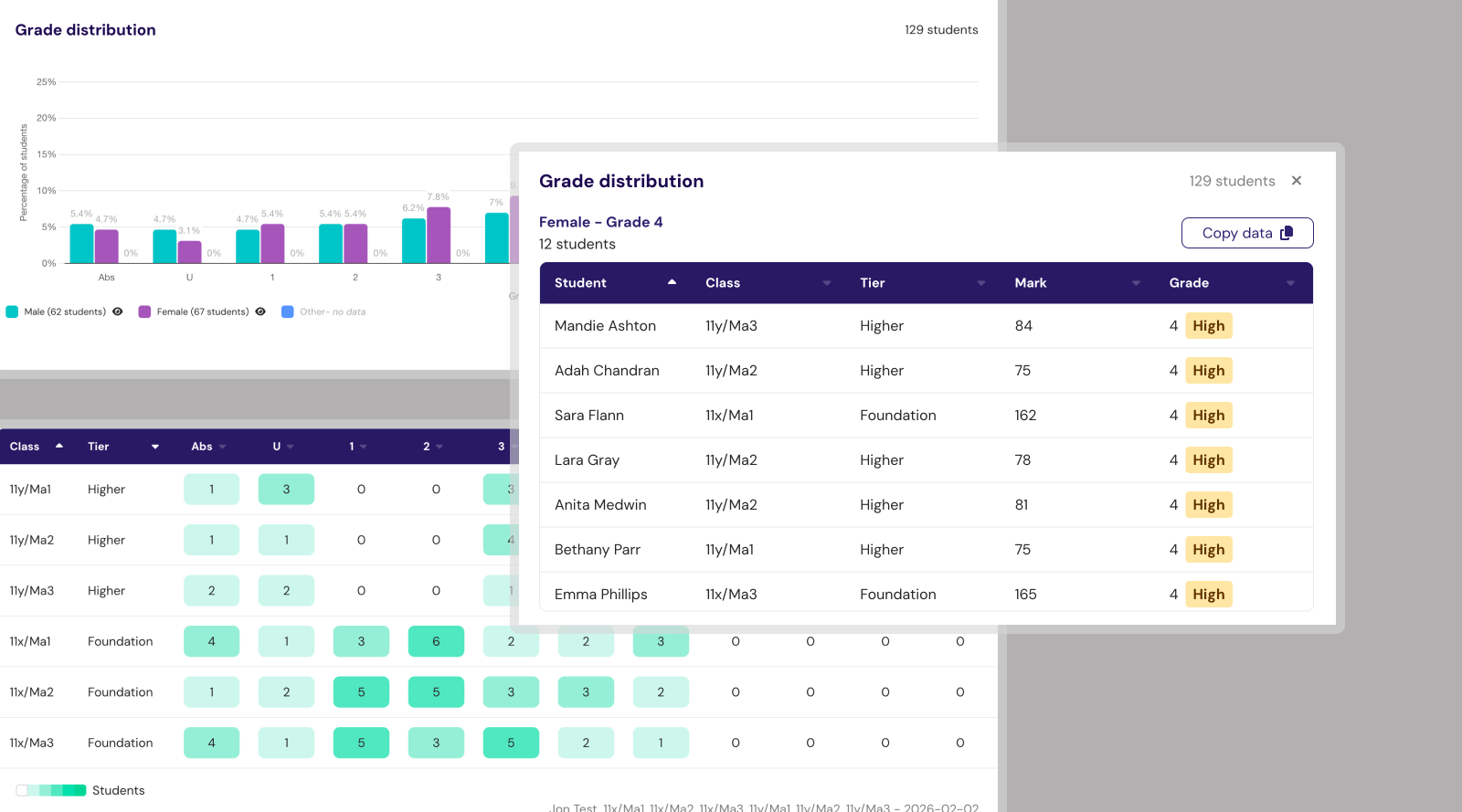

The tight loop caught issues early. Teachers wanted to click directly into marksheet cells rather than use the navigation. We spotted it in testing and fixed it before engineering touched it.

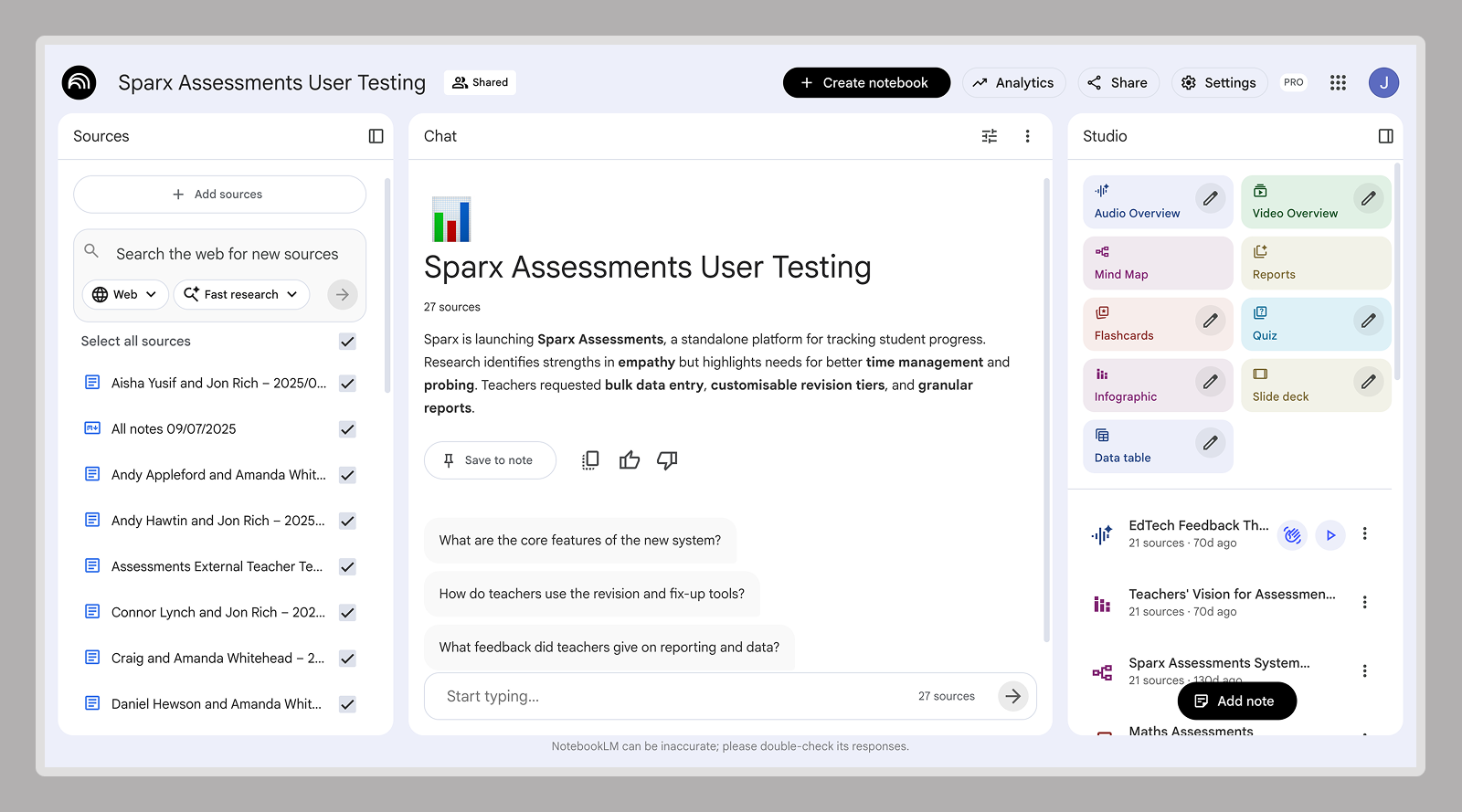

I used NotebookLM to synthesize findings from the sessions, creating a resource the whole team could query, from senior stakeholders to junior engineers.

450 schools, September 2024

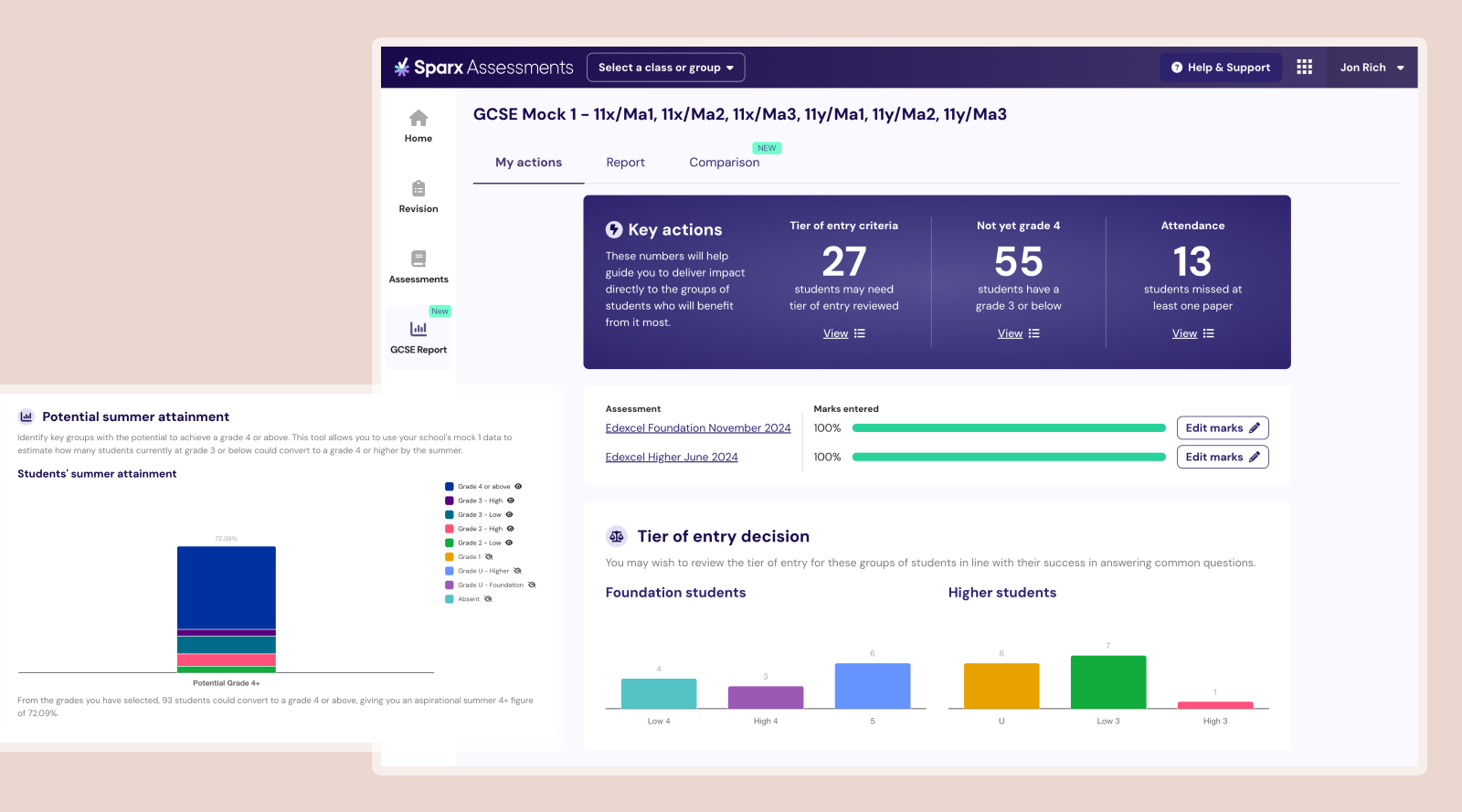

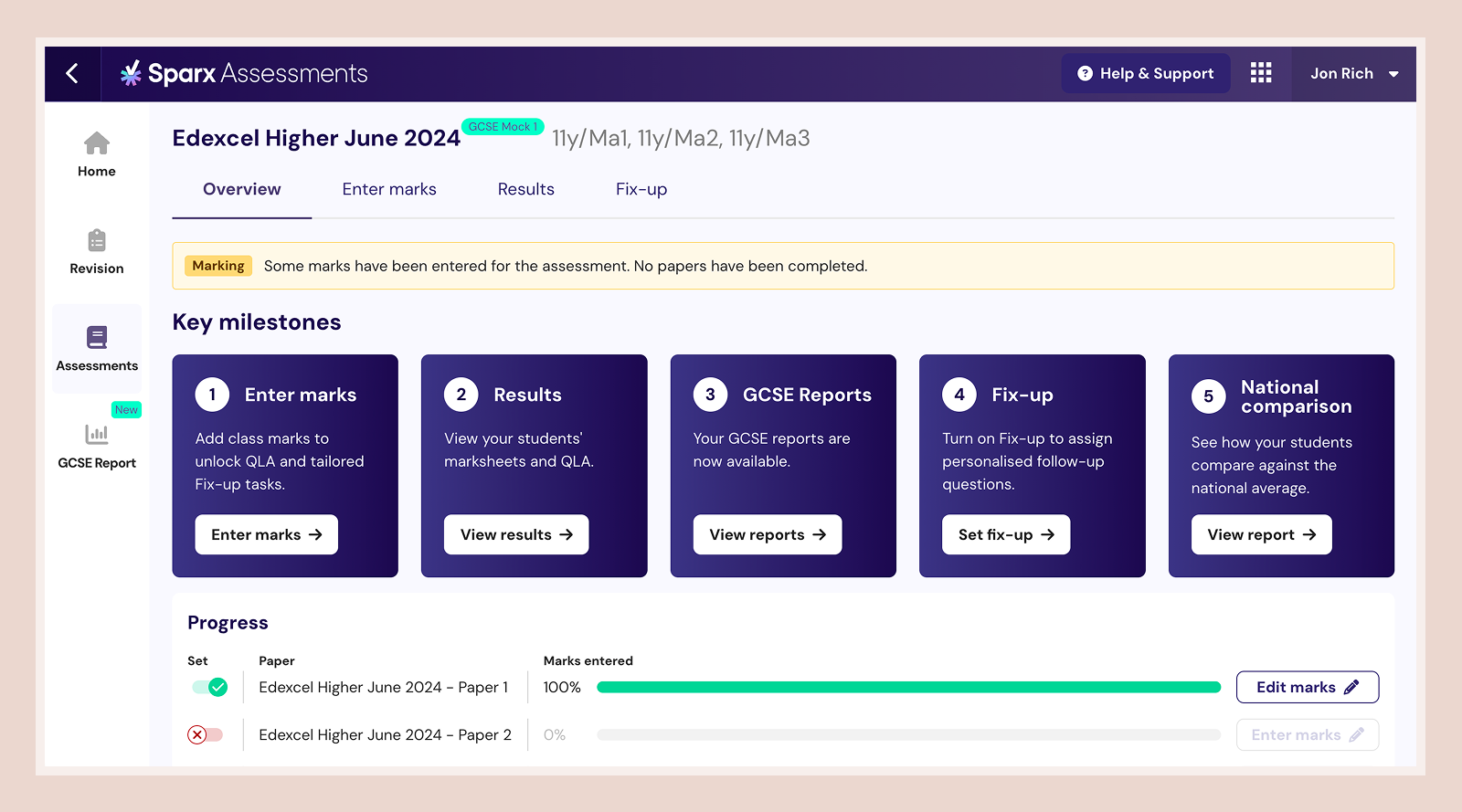

By the end of the first term, 88% of teachers reported measurable workload improvements.

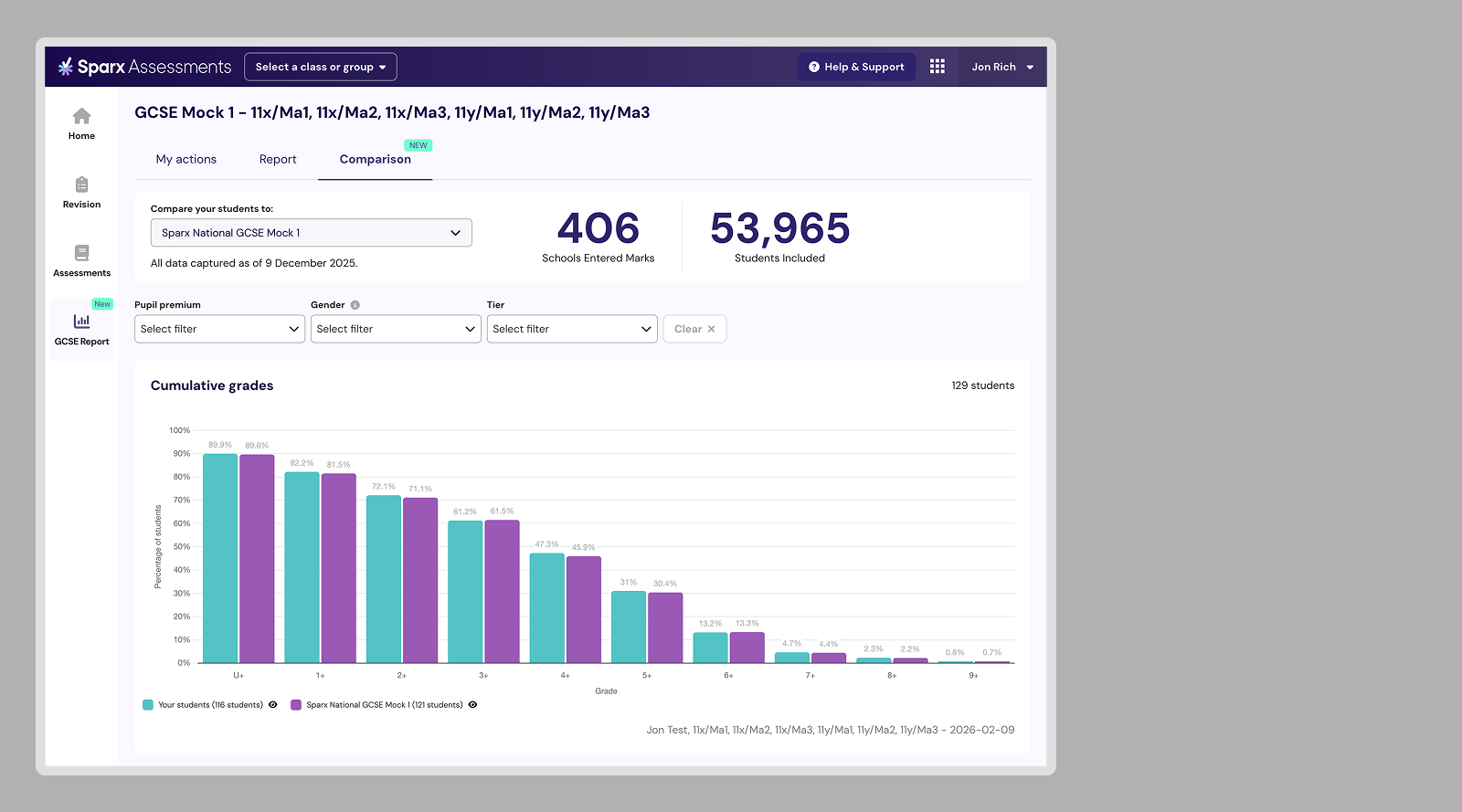

Nearly all pilot schools submitted marks to the Sparx National GCSE report, benchmarking 53,000 students in real time.

One Trust Lead told us:

“Everyone’s going to love this because my analysis is pretty much done. I don’t need to do anything. I just need to show up and show everyone this and they’ll be massively impressed.”

We brought the Mock 1 launch forward by two weeks because schools wanted it sooner.